Term Frequency-Inverse Document Frequency (TF-IDF).Text Similarity - Jaccard, Euclidean, Cosine.You can find the accompanying web app here. You’ll also get to play around with them to help establish a general intuition. By the end, you'll have a good grasp of when to use what metrics and embedding techniques. Call TfidfVectorizer from sklearn.feature_extraction.text import TfidfVectorizer Let’s check the cosine similarity with TfidfVectorizer, and see how it change over CountVectorizer. from import cosine_similarityĬosine_similarity_matrix = cosine_similarity(vector_matrix)Ĭreate_dataframe(cosine_similarity_matrix,) doc_1 doc_2īy observing the above table, we can say that the Cosine Similarity between doc_1 and doc_2 is 0.47 Let’s compute the Cosine Similarity between doc_1 and doc_2. Scikit-Learn provides the function to calculate the Cosine similarity. Return(df) create_dataframe(vector_matrix.toarray(),tokens) data digital economy is new of oil theĭoc_2 1 0 0 1 1 0 1 0 Find Cosine Similarity import pandas as pdĭoc_names = ĭf = pd.DataFrame(data=matrix, index=doc_names, columns=tokens) Let’s create the pandas DataFrame to make a clear visualization of vectorize data along with tokens. Tokens Ĭonvert sparse vector matrix to numpy array to visualize the vectorized data of doc_1 and doc_2. tokens = count_vectorizer.get_feature_names()

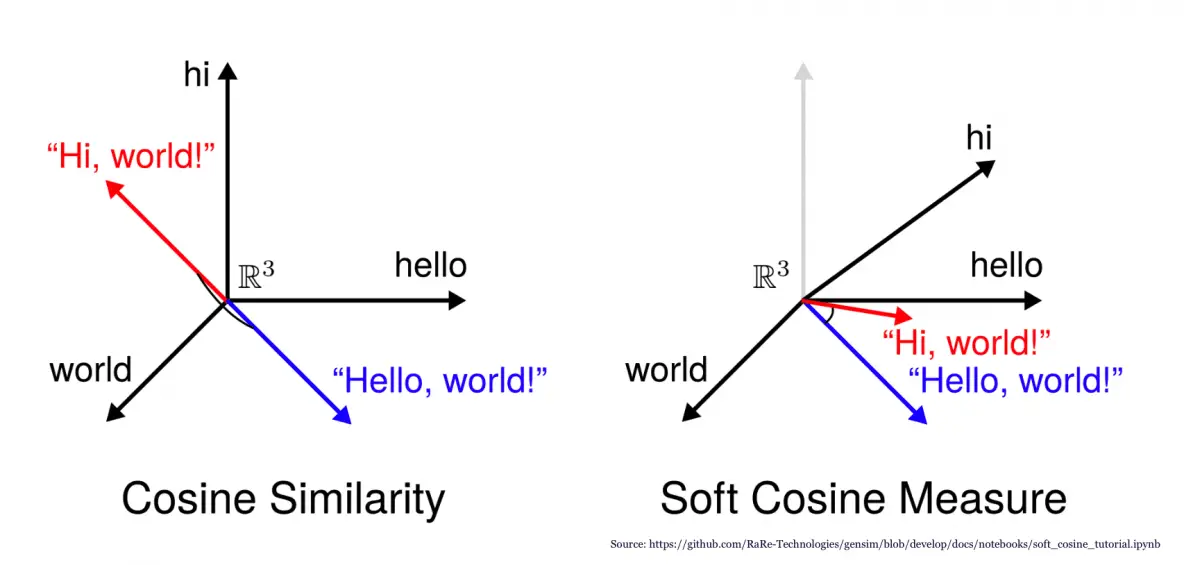

Here, is the unique tokens list found in the data. Let’s convert it to numpy array and display it with the token word. The generated vector matrix is a sparse matrix, that is not printed here. With 11 stored elements in Compressed Sparse Row format> Vector_matrix = count_vectorizer.fit_transform(data) doc_1 = "Data is the oil of the digital economy"ĭata = Call CountVectorizer from sklearn.feature_extraction.text import CountVectorizer Let’s define the sample text documents and apply CountVectorizer on it. TfidfVectorizer is more powerful than CountVectorizer because of TF-IDF penalized the most occur word in the document and give less importance to those words. Please refer to this tutorial to explore more about CountVectorizer and TfidfVectorizer. To count the word occurrence in each document, we can use CountVectorizer or TfidfVectorizer functions that are provided by Scikit-Learn library. The common way to compute the Cosine similarity is to first we need to count the word occurrence in each document. Let’s compute the Cosine similarity between two text document and observe how it works. The Cosine Similarity is a better metric than Euclidean distance because if the two text document far apart by Euclidean distance, there are still chances that they are close to each other in terms of their context. doc_1 = "Data is the oil of the digital economy" Let’s see the example of how to calculate the cosine similarity between two text document. The mathematical equation of Cosine similarity between two non-zero vectors is: The value closer to 0 indicates that the two documents have less similarity. If the Cosine similarity score is 1, it means two vectors have the same orientation. The Cosine similarity of two documents will range from 0 to 1. Mathematically, Cosine similarity metric measures the cosine of the angle between two n-dimensional vectors projected in a multi-dimensional space. The text documents are represented in n-dimensional vector space. 
A word is represented into a vector form. Please refer to this tutorial to explore the Jaccard Similarity.Ĭosine similarity is one of the metric to measure the text-similarity between two documents irrespective of their size in Natural language Processing. You will also get to understand the mathematics behind the cosine similarity metric with example. In this tutorial, you will discover the Cosine similarity metric with example. All these metrics have their own specification to measure the similarity between two queries. There are various text similarity metric exist such as Cosine similarity, Euclidean distance and Jaccard Similarity. Text Similarity has to determine how the two text documents close to each other in terms of their context or meaning.
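To build some intuition for where these scores come from, here is a minimal sketch that recomputes the cosine similarity by hand from the count vectors of doc_1 and doc_2, using only the Python standard library. The doc_2 row is taken from the count table, and the doc_1 row is derived from the text of doc_1. The helper names `cosine` and `tfidf_weight` are made up for this illustration, and the IDF weighting assumes the smoothed formula that scikit-learn documents for its default settings, so treat the TF-IDF number as an approximation of what TfidfVectorizer would give rather than its guaranteed output.

```python
import math

# Token order and raw counts for the two documents.
# The doc_1 row is derived from "Data is the oil of the digital economy".
tokens = ["data", "digital", "economy", "is", "new", "of", "oil", "the"]
counts = {
    "doc_1": [1, 1, 1, 1, 0, 1, 1, 2],
    "doc_2": [1, 0, 0, 1, 1, 0, 1, 0],
}


def cosine(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def tfidf_weight(rows):
    """Scale raw counts by a smoothed IDF: ln((1 + n) / (1 + df)) + 1.

    Assumption: this mirrors scikit-learn's default smoothing. No length
    normalisation is applied because cosine similarity ignores vector length.
    """
    n_docs = len(rows)
    n_terms = len(rows[0])
    # Document frequency: in how many documents each token appears.
    df = [sum(1 for row in rows if row[j] > 0) for j in range(n_terms)]
    idf = [math.log((1 + n_docs) / (1 + d)) + 1 for d in df]
    return [[c * w for c, w in zip(row, idf)] for row in rows]


count_similarity = cosine(counts["doc_1"], counts["doc_2"])
weighted = tfidf_weight(list(counts.values()))
tfidf_similarity = cosine(weighted[0], weighted[1])

print(f"count-based cosine similarity:     {count_similarity:.2f}")
print(f"TF-IDF-weighted cosine similarity: {tfidf_similarity:.2f}")
```

The count-based value comes out at 0.47, matching the score reported for the count vectors. The TF-IDF-weighted value is lower, roughly 0.33 under the assumed formula, because the only words the two documents share (data, is, oil) appear in both documents and therefore receive the smallest weights.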
